As a dual citizen of Canada and Hungary, I am of course delighted to hold an EU passport. Even though I have no plans to move there in the foreseeable future, it is nice to know that I have freedom of movement within the EU, and that in most places I could also claim permanent residence and work.

Unfortunately, as a person firmly committed to the values and interests of our Western alliance, I am increasingly concerned about Viktor Orban’s antics. His coziness with Russia’s dictator, his willingness to embrace undemocratic, “illiberal” policies for the sake of holding on to power, his warm relationship with Trump, his misuse of his position as Hungary holds the rotating EU presidency, exemplified by his rogue visits to Moscow and Beijing… Plain and simple, he is becoming a security threat to the Western alliance.

In the past, I have often called Orban a horse trader (a particularly apt expression in the Hungarian language is “lókupec”). The implication, of course, was that even though Orban is deeply corrupt and unscrupulous, he is driven in the end by rational self-interest, and thus remains predictable and reliable.

But lately, I’ve been wondering if that is still true. What we are witnessing, I do not fully comprehend. Is he a Putin asset? Did he simply bet on the wrong horse this time around, now doubling down on a bad bet?

Whatever it is, he is not only doing tangible harm to his own country and, of course, the Western alliance, he is also making amateurish mistakes. The most recent example concerns his journey to Kyiv and Moscow. Parading around as a champion of peace, he forgot to talk to his hosts about the one thing that can severely impact Hungary’s economy: The uninterrupted supply of Russian oil through a pipeline that traverses Ukrainian territory. Oops!

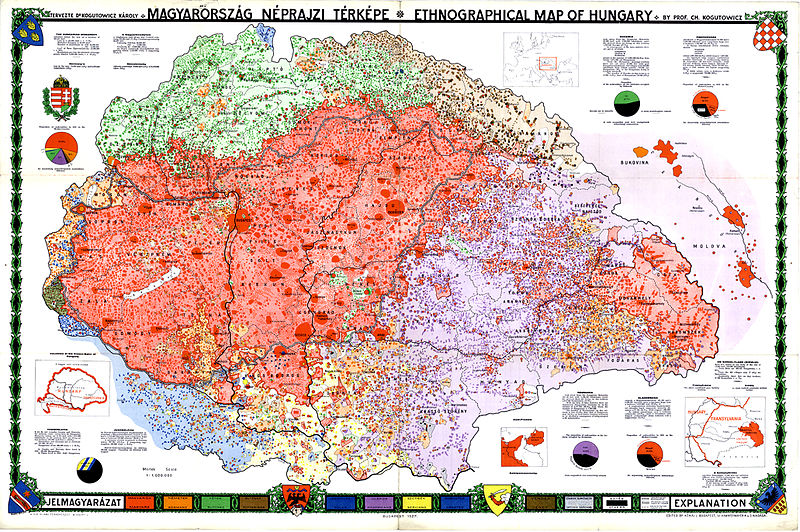

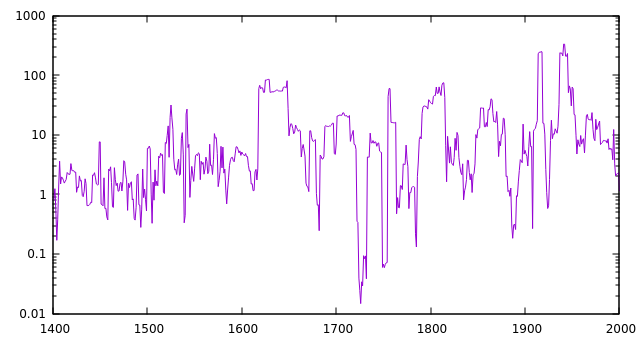

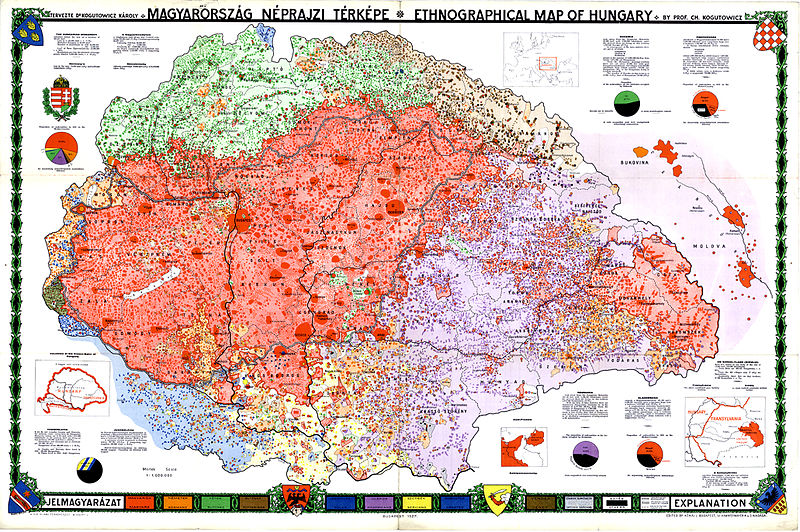

Orban is now widely despised in the West and with good reason. At home, however, he remains firmly in charge. The secret, beyond his “illiberal” concentration of powers and his success at undermining independent media and the independence of the judiciary, is the flawed historical self-assessment of his nation. Many Hungarians still view themselves as victims of the Treaty of Trianon of 1920, part of the post-war Paris peace settlement, which they see as massively unfair, robbing the country of roughly two thirds of its historical territory. Which undeniably happened, of course, but context is everything. The last time those historical borders of Hungary existed as the borders of an independent political entity was in 1526, when Hungary suffered a devastating loss fighting the Ottoman Empire (a self-inflicted wound, arguably, as it was Hungary that broke a peace treaty with the Ottomans). Fast forward to the 20th century: a famous Hungarian cartographer, Károly Kogutowicz, used data from the last pre-war census of 1910 to compile this ethnographic map of the country:

Although there are clearly visible areas of the map outside the country’s present-day borders that had majority Hungarian populations, the borders are roughly in the right place. We can argue about specific patches of land in the border regions of Slovakia, in northern Transylvania, and a few other locations (hey, my father’s family is from Temesvár, now Timisoara, Romania, and I’ve had friends and relatives from famous, formerly Hungarian towns like Kolozsvár/Cluj or Marosvásárhely/Tirgu Mures, so it’s not like I am unaware of their plight, especially under Ceausescu’s regime), but one thing is clear: most of the territory of the historical Kingdom of Hungary was not dominated by a Hungarian ethnic majority. This should not be surprising: medieval kingdoms were not ethnic nation-states. Whether or not it is wise to base borders on ethnicity is another question, but so long as we accept that premise, the borders speak for themselves: they may not be fair but they certainly represent ethnic realities far more closely than the historical borders many Hungarians still dream about.

Orban can, of course, whip up nationalist feelings. He can easily explain his stance on Ukraine to his domestic audience by alluding to how badly ethnic Hungarians are supposedly treated in that country. The Orban of the past, the young leader of a youthful movement (Fidesz stands for Alliance of Young Democrats in Hungarian), is long forgotten. Instead, we now have a leader who adores Russia’s dictator. A leader whose actions appear to echo a past when, nearly a century ago, another Hungarian leader, Horthy, maneuvered the country into a foreign policy cul-de-sac. I fear that something similar is going to happen again, and the country will suffer, just as it suffered during that fateful winter of 1944-45 when it was ravaged by war and by brutal Nazi rule, only to be followed by more than four decades of communist oppression.