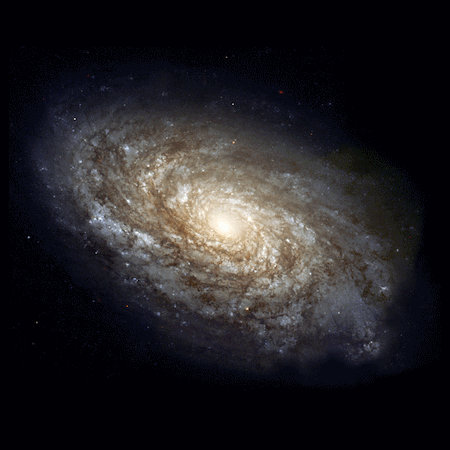

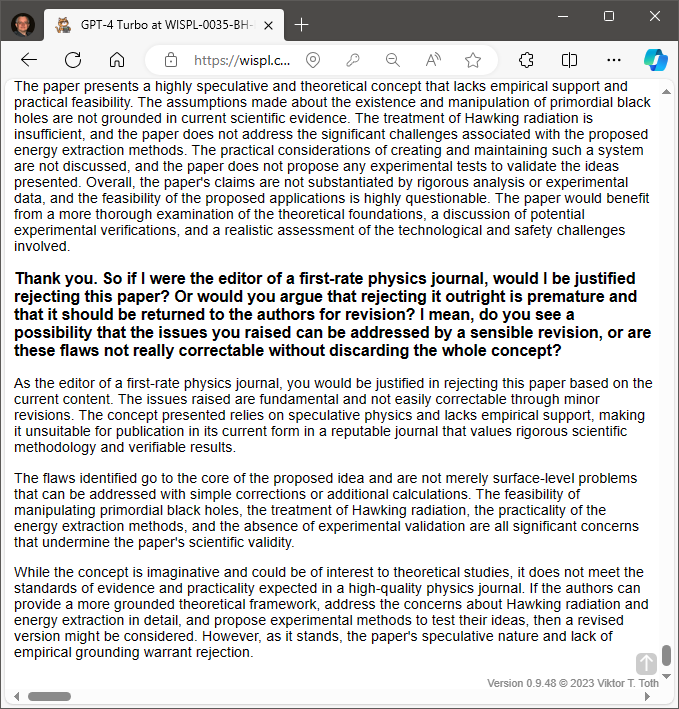

This is probably my most ambitious paper to date. It’d be a lie to suggest that I was not worried: what am I missing?

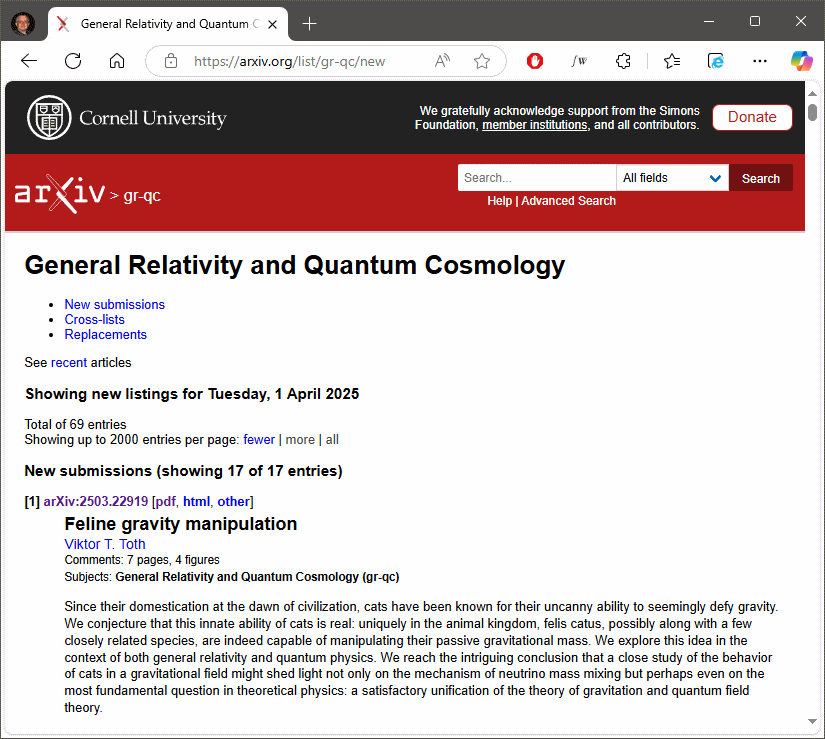

Which is why I have to begin by showing my appreciation to the editors of Classical and Quantum Gravity who, rather than dismissing my paper, recognized its potential value and invited no fewer than four reviewers. Much to my (considerable) relief, the reviewers seemed to agree: What I am doing makes some sense.

One of my cats, helping me to understand gravity.

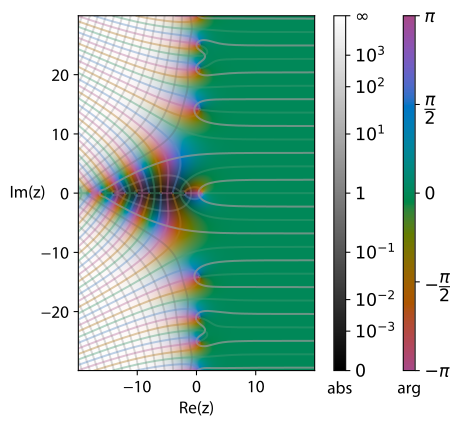

What exactly am I doing? Well, as everyone (OK, everyone with at least a casual interest in general relativity) knows, the gravitational field doubles as the metric of spacetime. And we know that the metric is a “symmetric” quantity, \(g_{\mu\nu}=g_{\nu\mu}\): the distance from \(A\) to \(B\) is the same as the distance from \(B\) to \(A\), and this does not change even when the “distance” in question is the spacetime interval, the infinitesimal proper time between neighboring events.
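A quick numerical illustration of why symmetry comes so naturally here. This is a sketch only: the "metric" components and the displacement below are arbitrary made-up integers, not a physical spacetime, and the helper names `split` and `interval` are hypothetical. The interval \(ds^2=g_{\mu\nu}dx^\mu dx^\nu\) is a quadratic form, so it is unchanged when \(dx\) is reversed, and any antisymmetric part of \(g_{\mu\nu}\) drops out of it entirely.

```python
from fractions import Fraction


def split(g):
    """Return the symmetric and antisymmetric parts of a square matrix g
    (integer entries assumed, so Fraction keeps the arithmetic exact)."""
    n = len(g)
    sym = [[Fraction(g[i][j] + g[j][i], 2) for j in range(n)] for i in range(n)]
    anti = [[Fraction(g[i][j] - g[j][i], 2) for j in range(n)] for i in range(n)]
    return sym, anti


def interval(g, dx):
    """ds^2 = g_{mu nu} dx^mu dx^nu, summed over both indices."""
    n = len(dx)
    return sum(g[i][j] * dx[i] * dx[j] for i in range(n) for j in range(n))


# Hypothetical nonsymmetric "metric": a Minkowski-like diagonal plus an
# arbitrary antisymmetric piece, and an arbitrary displacement dx.
g = [[-1, 2, 0, 0],
     [-2, 1, 0, 3],
     [0, 0, 1, 0],
     [0, -3, 0, 1]]
dx = [1, 2, 3, 4]

sym, anti = split(g)
print(interval(g, dx) == interval(sym, dx))             # True
print(interval(anti, dx))                                # 0
print(interval(g, dx) == interval(g, [-x for x in dx]))  # True
```

Reversing \(dx\) changes nothing because every term is quadratic in the displacement, and the antisymmetric part contributes nothing because each term \(g_{[\mu\nu]}dx^\mu dx^\nu\) is cancelled by its partner \(g_{[\nu\mu]}dx^\nu dx^\mu\).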

So we treat the metric as symmetric, which greatly simplifies calculations.

Alternatively, we may treat the metric as nonsymmetric. Einstein spent the last several decades of his life working on a theory with a nonsymmetric metric, which, he hoped, would lead to a unification of the theories of gravitation and electromagnetism. It didn’t.

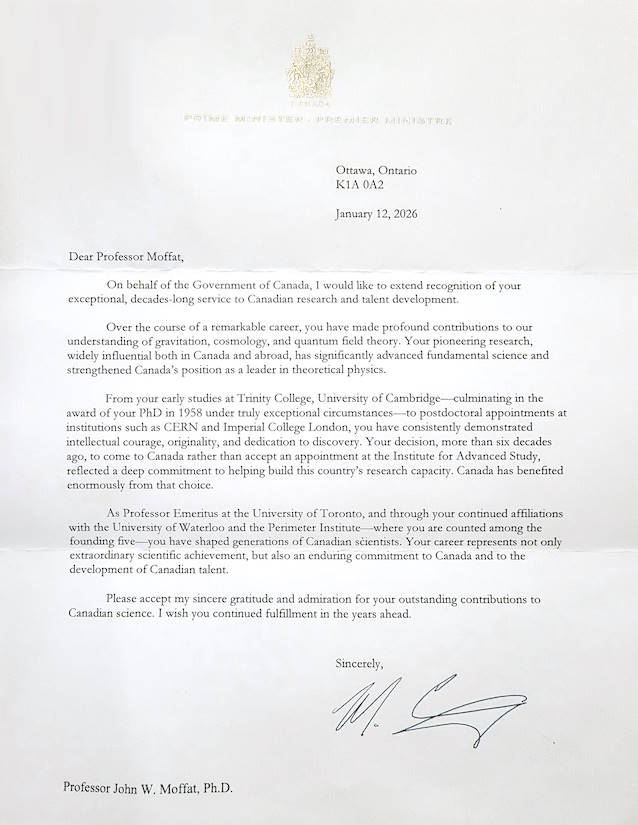

John Moffat also spent a considerable chunk of his professional life working on his nonsymmetric gravitational theory (NGT). Unlike Einstein, Moffat assumed that the extra degrees of freedom are also gravitational and may lead to a large-scale modification of the expression for gravitational acceleration, potentially explaining riddles like the rotation curves of galaxies.

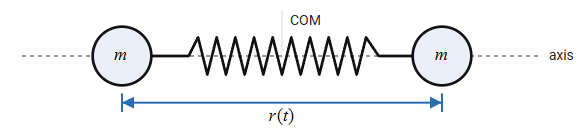

But herein lies the puzzle. A self-respecting field theory these days is usually written down by way of a Lagrangian density, with the corresponding field equations derived using the so-called action principle. In the case of general relativity, this Lagrangian density is called the Einstein-Hilbert Lagrangian. The field that is the subject of this Lagrangian is the gravitational field. Unless we are interested in Einstein’s unified field theory or Moffat’s NGT, we assume that this field has the requisite symmetry that is characteristic of a metric.

Except that at no point do we actually inform the machinery behind the action principle, namely the methods of the calculus of variations, that the field has this property. Rather, in standard derivations we just impose this constraint “by hand” during the derivation itself. This approach is mathematically inconsistent even if it leads to the desired, expected result.

Usually, a restriction that constrains the degrees of freedom of a physical system is incorporated into the Lagrangian using what are called Lagrange multipliers. Why would we not use a Lagrange multiplier, then, to restrict the gravitational field tensor so that, instead of the 16 independent degrees of freedom that characterize a generic rank-2 tensor in four dimensions, we have only the 10 degrees of freedom of a symmetric tensor?
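For readers who want the bookkeeping spelled out, assuming the usual convention with a factor of one half (the one under which \(g_{[\mu\nu]}\) in the action below is the antisymmetric part of the metric): any rank-2 tensor splits uniquely into a symmetric and an antisymmetric piece, and in four dimensions the independent components divide up as 16 = 10 + 6.

\begin{align}
g_{\mu\nu} &{}= g_{(\mu\nu)} + g_{[\mu\nu]},\\
g_{(\mu\nu)} &{}= \tfrac{1}{2}\left(g_{\mu\nu}+g_{\nu\mu}\right),\qquad g_{[\mu\nu]} = \tfrac{1}{2}\left(g_{\mu\nu}-g_{\nu\mu}\right),\\
\underbrace{4^2}_{16} &{}= \underbrace{\tfrac{4\cdot 5}{2}}_{10\ \text{symmetric}} + \underbrace{\tfrac{4\cdot 3}{2}}_{6\ \text{antisymmetric}}.
\end{align}

The Lagrange multiplier only needs to remove the six antisymmetric components.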

This is precisely what I have done. Not without consternation: After all, no less a mathematician than David Hilbert chose not to do this, even though he was very much aware of the technique of Lagrange multipliers and their utility, which he took advantage of in other contexts while working on relativity theory.

Yet, for 109 years and counting, the symmetry of the metric, though assumed, has never been incorporated into the standard Lagrangian formulation of the theory. I honestly don’t know why, but I decided to address this by introducing a Lagrange multiplier term:

\begin{align}
{\cal S}_{\rm grav}=\frac{1}{2\kappa}\int d^4x \sqrt{-g}(R-2\Lambda+\lambda^{\mu\nu}g_{[\mu\nu]}).
\end{align}

There. Variation with respect to the nondynamical Lagrange multiplier \(\lambda^{\mu\nu}\) yields the constraint \(g_{[\mu\nu]}=0\). Job done. Except… Except that as a result of introducing this term, Einstein’s field equations are slightly modified; in fact, they split into two equations:

\begin{align}
R_{\mu\nu}-\tfrac{1}{2}Rg_{\mu\nu}+\Lambda g_{\mu\nu} &{}= 8\pi G T_{(\mu\nu)},\\
\lambda_{[\mu\nu]} &{}= 8\pi G T_{[\mu\nu]}.
\end{align}

The first of these two equations is just the usual field equation, but with a twist: The stress-energy tensor on the right-hand side is explicitly symmetrized.
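Two small steps behind these equations, spelled out under standard conventions (\(\kappa=8\pi G\) in units with \(c=1\), which is how the \(1/2\kappa\) of the action becomes the \(8\pi G\) of the field equations). Varying the action with respect to \(\lambda^{\mu\nu}\) gives the constraint directly, since \(\sqrt{-g}\neq 0\) for a nondegenerate metric. And because a symmetric tensor contracted with an antisymmetric one vanishes, only the antisymmetric part \(\lambda^{[\mu\nu]}\) of the multiplier couples to anything, which is why it alone appears in the second equation:

\begin{align}
\frac{\delta{\cal S}_{\rm grav}}{\delta\lambda^{\mu\nu}} &{}= \frac{1}{2\kappa}\sqrt{-g}\,g_{[\mu\nu]} = 0 \quad\Rightarrow\quad g_{[\mu\nu]}=0,\\
\lambda^{\mu\nu}g_{[\mu\nu]} &{}= \lambda^{(\mu\nu)}g_{[\mu\nu]}+\lambda^{[\mu\nu]}g_{[\mu\nu]} = \lambda^{[\mu\nu]}g_{[\mu\nu]}.
\end{align}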

But the second! That’s where things get really interesting. The Lagrange multiplier \(\lambda_{[\mu\nu]}\) is nondynamical and is not constrained by any other equation, so it simply takes on whatever value the right-hand side demands. That means that the antisymmetric part of \(T_{\mu\nu}\) can be anything. To quote a highlighted sentence from my own manuscript: “Einstein’s gravitational field is unaffected by the antisymmetric part of a generalized stress-energy-momentum tensor.”

Or, to put it more bluntly, the gravitational field does not give a flying fig about matter spinning or rotating. How matter spins or does not spin would be determined by the properties of that matter; gravity does not care.
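To see the mechanism in isolation, here is a finite-dimensional toy analogue. It is an illustrative sketch, not the paper’s calculation: the toy Lagrangian and the function names are hypothetical. A matrix “field” \(M\) is coupled to a nonsymmetric “source” \(T\) through \(L=-\tfrac12\sum_{ij}M_{ij}^2+\sum_{ij}T_{ij}M_{ij}+\sum_{k<l}\lambda_{kl}(M_{kl}-M_{lk})\), and all stationarity conditions are solved together as one exact linear system. The same split appears: \(M\) comes out equal to the symmetric part of \(T\), while the multiplier absorbs the antisymmetric part. The multiplier’s sign and normalization differ from the paper’s second equation because of this toy’s conventions; only the structure is meant to carry over.

```python
from fractions import Fraction


def solve_linear(A, b):
    """Solve A x = b exactly by Gauss-Jordan elimination (A must be nonsingular)."""
    n = len(A)
    rows = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        # Pick any row at or below `col` with a nonzero entry in this column.
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        p = rows[col][col]
        rows[col] = [v / p for v in rows[col]]
        for r in range(n):
            if r != col and rows[r][col] != 0:
                f = rows[r][col]
                rows[r] = [a - f * c for a, c in zip(rows[r], rows[col])]
    return [row[-1] for row in rows]


def toy_constrained_field(T):
    """Stationary point of the toy Lagrangian
        L(M, lam) = -1/2 sum_ij M_ij^2 + sum_ij T_ij M_ij
                    + sum_{k<l} lam_kl (M_kl - M_lk).
    Returns the matrix M and the multipliers lam as {(k, l): value}."""
    n = len(T)
    pairs = [(k, l) for k in range(n) for l in range(k + 1, n)]
    size = n * n + len(pairs)  # unknowns: all M_ij, then each lam_kl
    A, b = [], []
    # dL/dM_ij = 0:  -M_ij + T_ij + (+lam_ij if i < j, -lam_ji if i > j) = 0
    for i in range(n):
        for j in range(n):
            row = [0] * size
            row[i * n + j] = -1
            if i < j:
                row[n * n + pairs.index((i, j))] = 1
            elif i > j:
                row[n * n + pairs.index((j, i))] = -1
            A.append(row)
            b.append(-T[i][j])
    # dL/dlam_kl = 0:  M_kl - M_lk = 0, i.e. the symmetry constraint
    for k, l in pairs:
        row = [0] * size
        row[k * n + l] = 1
        row[l * n + k] = -1
        A.append(row)
        b.append(0)
    x = solve_linear(A, b)
    M = [[x[i * n + j] for j in range(n)] for i in range(n)]
    lam = {pair: x[n * n + q] for q, pair in enumerate(pairs)}
    return M, lam


# Two hypothetical sources with the same symmetric part but
# different antisymmetric parts.
T1 = [[1, 2], [0, 3]]
T2 = [[1, 5], [-3, 3]]
M1, lam1 = toy_constrained_field(T1)
M2, lam2 = toy_constrained_field(T2)
print(M1 == M2)                    # True
print(M1 == [[1, 1], [1, 3]])      # True: the symmetric part of T
print(lam1[(0, 1)], lam2[(0, 1)])  # -1 -4
```

Solving the full linear system, rather than just writing down the answer, is deliberate: nothing in the code forces \(M\) to be symmetric except the multiplier rows, so the output shows the constraint doing its job. Changing only the antisymmetric part of \(T\) changes `lam` but leaves \(M\) untouched, the toy counterpart of the highlighted sentence quoted above.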

This was a surprising, potentially profound result. Previously, authors tried to account for the presence of nonvanishing rotation by introducing a variety of ad hoc tensor formalisms. But as my derivation suggests, perhaps all that was unnecessary. Matter is free to rotate, insofar as gravity is concerned: the stress-energy tensor does not need to be symmetric.

Is this result really new? How can that be? What am I missing? These were my thoughts when I submitted my manuscript. Who knows… maybe, just maybe, I was not spouting nonsense and stumbled upon something of real importance.

I expect my paper to appear on the pages of CQG in due course [edit: it just did]; I have now also submitted the manuscript to arXiv, where I hope it will appear this weekend or early next week.