I got back last night from Phoenix, where I spent a day as an invited seminar speaker with the cosmology group at Arizona State University.

When I arranged my journey, I was delighted to learn that Porter Airlines had a direct connection, YOW to PHX. Yay! But wait… I knew it was a long trip, but 5.5 hours? Holy macaroni. And I had never flown Porter. So I worried… a budget airline? Crappy service?

I should not have been concerned. At the risk of sounding like a cheap commercial, I have to say, my experience with Porter was super positive. They were genuinely nice. I mean it. The aircraft was relatively new and clean, the legroom was plenty adequate (I bought an exit row ticket, though it seemed decent in other rows as well), the service on board was friendly, the free snacks and drinks were good (I don’t drink alcohol when flying, but wine and beer were also served for free)… I’d go so far as to suggest that the experience reminded me a bit of what flying used to be like half a century ago, when I first sat on an airplane and when even economy class passengers were treated like minor royalty.

And Phoenix, well, at least the parts of Phoenix that I saw, was very pleasant. I’ve been to Phoenix before but only driving through, not spending any significant time there. Now I spent two nights, and throughout my stay, I cannot recall a single grumpy person. Everyone was smiling, and they went out of their way to be helpful. I was asking some students for directions on the ASU campus when another student, who overheard my question, immediately offered to guide me to the building I was looking for. Later, a member of the hotel staff went out of her way to make sure that I’d find the store I was looking for in the neighborhood.

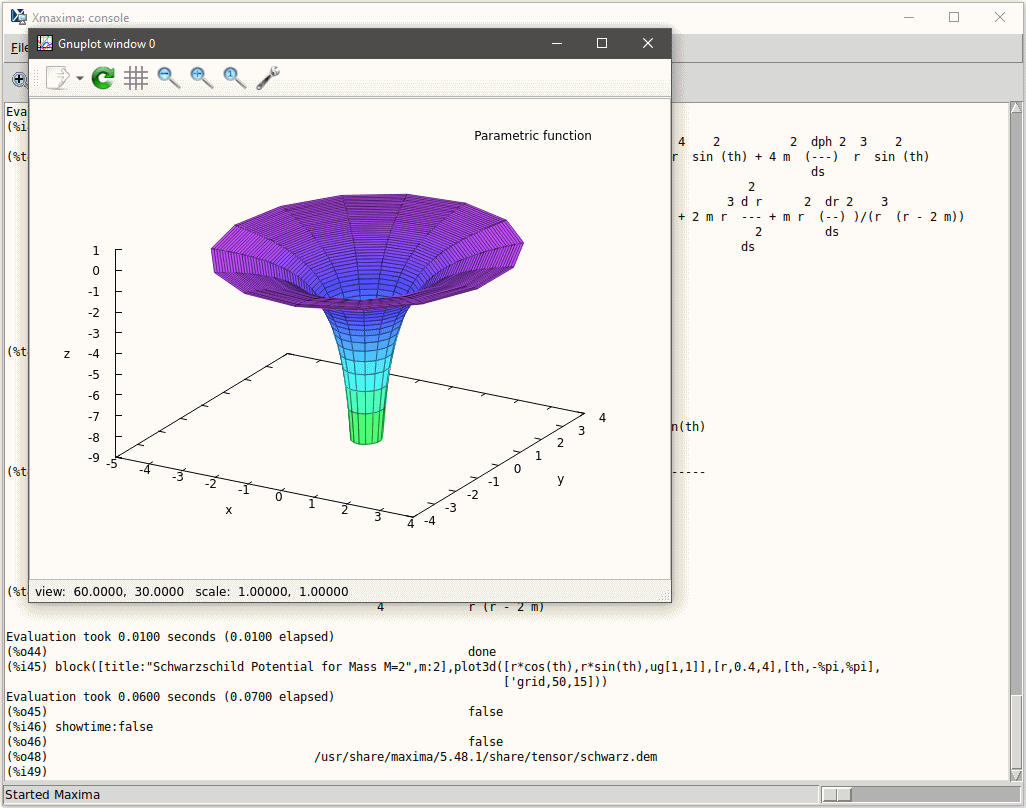

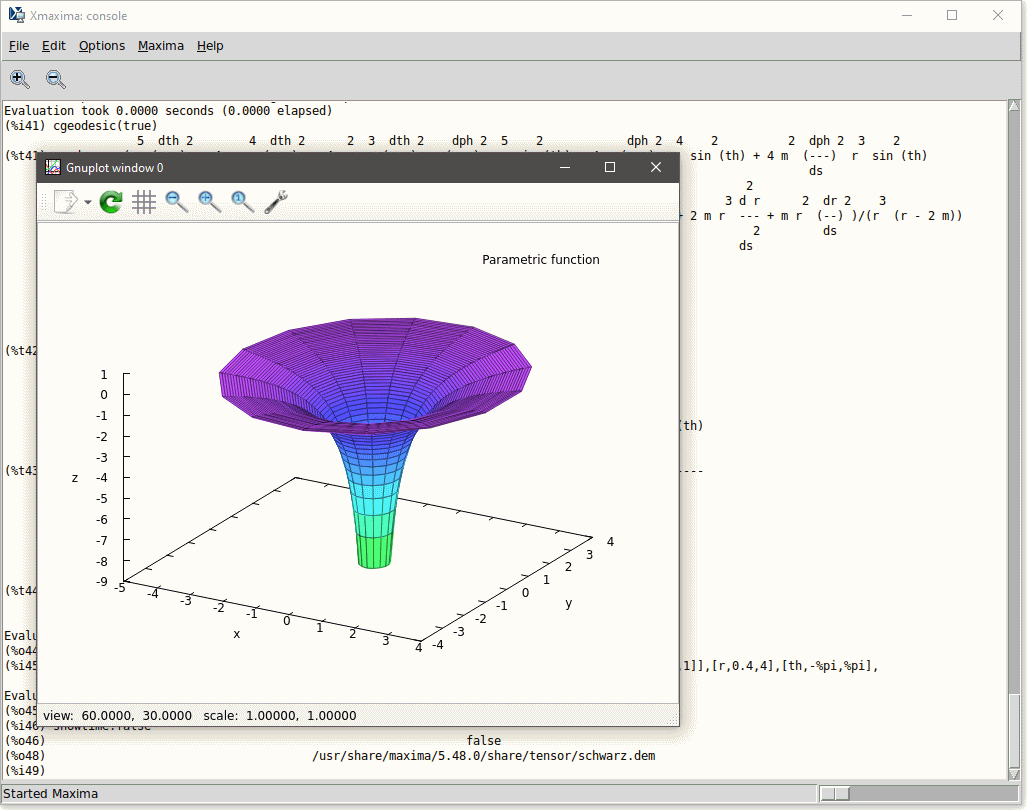

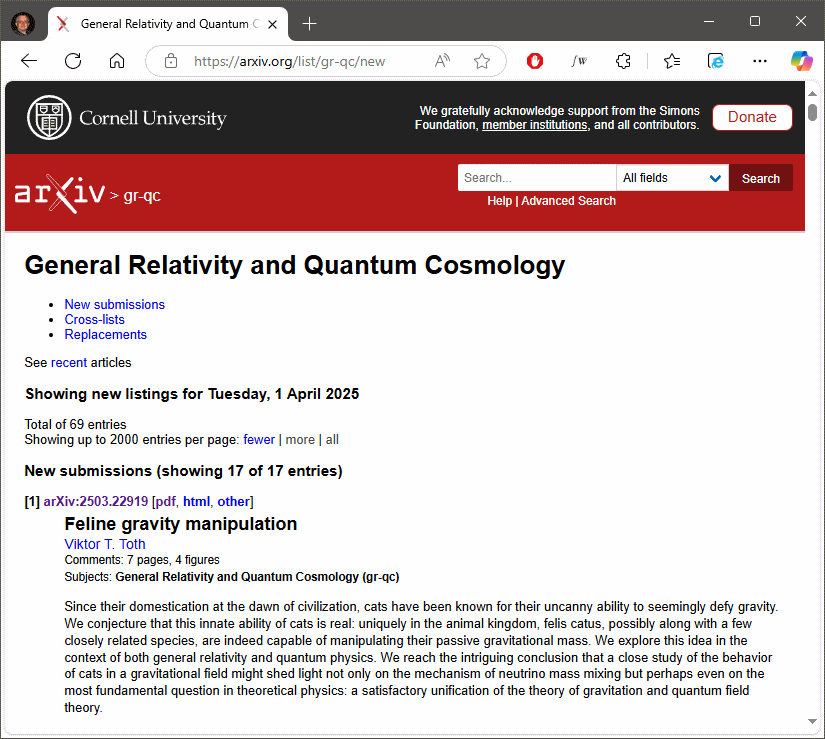

Politics of the day aside, my dislike of travel these days aside, I am glad I accepted the invitation. It was an honor, my hosts treated me with exceptional hospitality, and my talk was well received. It proved to be shorter than intended, mainly because not a single soul interrupted me, even though I honestly expected interruptions. And I was also able to collaborate a bit with my host, to his apparent delight.