In two days, I got two notices of papers being accepted, among them our paper about the possible relationship between modified gravity and the origin of inertia. I am most pleased, because the journal accepting it (MNRAS Letters) is quite prestigious and the paper was a potentially controversial one. The other paper is about Pioneer, and was accepted by Physical Review D. Needless to say, I am pleased.

I’ve read a lot about the coming “digital dark age”, when much of the written record produced by our digital society will no longer be readable due to changing data formats, obsolete hardware, or deteriorating media.

But perhaps, just perhaps, the opposite is happening. Material that is worth preserving may in fact be more likely to survive, simply because it’ll exist in so many copies.

For instance, I was recently citing two books in a paper: one by d’Alembert, written in 1743, and another by Mach, from 1883. Is it pretentious to cite books that you cannot find at any library within a 500-mile radius?

Not anymore, thanks, in this case, to Google Books:

Jean Le Rond d’Alembert: Traité de dynamique

Ernst Mach: Die Mechanik in ihrer Entwickelung

And now, extra copies of these books exist on my server, since I downloaded the PDFs and am preserving them. Others may do the same, and the books may survive as long as computers exist, as copies are being made and reproduced all the time.

Sometimes, it’s really nice to live in the digital world.

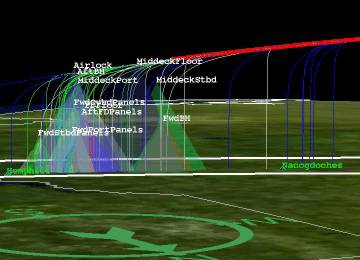

The other day, I put my latest (well, I actually did it last summer, but it’s the latest that has seen the light of day) Pioneer paper on arXiv.org; it is not about new results (yet), just a confirmation of the Pioneer anomaly using independently developed code, and a demonstration that a jerk term may be present in the data.

Once again, I am studying classical thermodynamics. Axiomatic thermodynamics, to be precise; none of this statistical physics business (which is interesting in its own right, but quite a different topic).

The more I learn about it, the more I find thermodynamics incredibly fascinating. Why is it so different from other areas of physics? Perhaps I now have an answer, one that may be trivial to some but that eluded me until now.

Most of physics is described by functions of coordinates and time. This is true even in general relativity: although space-time itself may be curved, the curvature (the metric) is still described as a function of space-time coordinates.

In contrast, there are no coordinates in axiomatic thermodynamics, only states. States are described by state variables, and usually you have these in excess. For instance, the state of one mole of an ideal gas is described by any two of the three variables p (pressure), V (volume) and T (temperature); once two of these are known, the third is given by the ideal gas equation of state, pV = KT, where K is a constant (for one mole, the molar gas constant R).
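To illustrate how the state variables come "in excess", here is a minimal sketch that, given any two of p, V and T for one mole of ideal gas, returns the third. It assumes SI units, so the constant K is the molar gas constant R ≈ 8.314 J/(mol·K); the function name `complete_state` is just an illustrative choice.

```python
R = 8.314462618  # molar gas constant, J/(mol K); plays the role of K for one mole


def complete_state(p=None, V=None, T=None):
    """Given any two of p [Pa], V [m^3], T [K] for one mole of ideal gas, return all three.

    Uses the equation of state pV = RT; exactly two arguments must be supplied.
    """
    if sum(x is not None for x in (p, V, T)) != 2:
        raise ValueError("specify exactly two of p, V, T")
    if p is None:
        p = R * T / V
    elif V is None:
        V = R * T / p
    else:
        T = p * V / R
    return p, V, T


# Molar volume near 0 degrees C: the pressure comes out close to one atmosphere.
print(complete_state(V=0.0224, T=273.15))
```

No time variable appears anywhere: the function maps a state to a state, which is the point made above.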

Notice that there is no independent variable. The variables p, V, and T are not written as functions of time. Nor should they be, since axiomatic thermodynamics is really equilibrium thermodynamics, and when a system is in equilibrium, it is not changing, its state is constant.

So why is it not called thermostatics? What does dynamics have to do with stationary states? As it turns out, thermodynamics is the science of fitting a square peg into a round hole: having just established that it is a science of static states, it nevertheless goes on to explain how states can change… so long as all the intermediate states can exist as static states in their own right, such as when you heat a gas slowly enough that its temperature is more or less uniform at all times and its state is well approximated by thermodynamic variables.

The zeroth law states that an empirical temperature exists, based on the transitivity of thermal equilibrium: systems that have the same temperature form equivalence classes.

The first law defines the (infinitesimal) quantity of heat dQ as the sum of changes in internal energy (dU) and mechanical work (p dV). An important thing about dQ is that there may not be a Q; in the jargon of differential forms, dQ is a Pfaffian that may not be exact.

The second law uses the assumption of irreversibility and Carathéodory’s theorem to show that there is an integrating denominator T and a function S such that dQ = T dS. (Presto, we have entropy.) Further, T is uniquely determined up to a multiplicative constant.
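To make the inexactness of dQ concrete, here is a small numerical sketch. It assumes one mole of a monatomic ideal gas (U = 3/2 RT, p = RT/V), and the endpoints are arbitrary illustrative choices. It integrates dQ = dU + p dV and dQ/T along two different paths between the same two states. The heat absorbed depends on the path, while the integral of dQ/T does not, which is what it means for T to be an integrating denominator and for S to be a state function.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)
CV = 1.5 * R     # assumption: one mole of a monatomic ideal gas, U = CV * T


def heat_form(T, V):
    """Coefficients (a, b) of dQ = a dT + b dV, from dQ = dU + p dV with p = RT/V."""
    return CV, R * T / V


def entropy_form(T, V):
    """Coefficients of dQ/T: the heat form divided by the integrating denominator T."""
    a, b = heat_form(T, V)
    return a / T, b / T


def integrate_segment(form, start, end, steps=10_000):
    """Midpoint-rule line integral of a 1-form along a straight segment in the (T, V) plane."""
    (T0, V0), (T1, V1) = start, end
    dT, dV = (T1 - T0) / steps, (V1 - V0) / steps
    total = 0.0
    for i in range(steps):
        s = (i + 0.5) / steps
        a, b = form(T0 + s * (T1 - T0), V0 + s * (V1 - V0))
        total += a * dT + b * dV
    return total


def integrate_path(form, points):
    """Sum the line integral over consecutive straight segments of a path."""
    return sum(integrate_segment(form, p, q) for p, q in zip(points, points[1:]))


start, end = (300.0, 0.010), (400.0, 0.020)  # (T in K, V in m^3)
path_a = [start, (400.0, 0.010), end]  # heat at constant V, then expand at constant T
path_b = [start, (300.0, 0.020), end]  # expand at constant T, then heat at constant V

q_a, q_b = integrate_path(heat_form, path_a), integrate_path(heat_form, path_b)
s_a, s_b = integrate_path(entropy_form, path_a), integrate_path(entropy_form, path_b)
print(f"Q along A: {q_a:.1f} J, along B: {q_b:.1f} J")
print(f"Entropy change along A: {s_a:.4f} J/K, along B: {s_b:.4f} J/K")
```

The two heat integrals differ by R·(400 − 300)·ln 2, roughly 576 J, whereas both entropy integrals agree with C_V ln(4/3) + R ln 2.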

Combined, the two laws can be written in the form dU = T dS − p dV. After that, much of what is in the textbooks about classical thermodynamics can be written compactly in the form of the Jacobian determinant ∂(T, S)/∂(p, V) = 1.
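As a sanity check of the Jacobian identity, here is a finite-difference sketch for one mole of a monatomic ideal gas (an assumption chosen for illustration). It uses T = pV/R and S = C_V ln T + R ln V, the latter defined only up to an additive constant. For this gas the identity reduces to C_p/R − C_V/R = 1, which is Mayer's relation C_p − C_V = R.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)
CV = 1.5 * R     # assumption: monatomic ideal gas


def temperature(p, V):
    return p * V / R  # from pV = RT for one mole


def entropy(p, V):
    # S = CV ln T + R ln V, up to an additive constant that drops out of derivatives
    return CV * math.log(temperature(p, V)) + R * math.log(V)


def jacobian_TS_pV(p, V, h=1e-6):
    """Central-difference estimate of the Jacobian determinant d(T, S)/d(p, V)."""
    dp, dV = h * p, h * V
    dT_dp = (temperature(p + dp, V) - temperature(p - dp, V)) / (2 * dp)
    dT_dV = (temperature(p, V + dV) - temperature(p, V - dV)) / (2 * dV)
    dS_dp = (entropy(p + dp, V) - entropy(p - dp, V)) / (2 * dp)
    dS_dV = (entropy(p, V + dV) - entropy(p, V - dV)) / (2 * dV)
    return dT_dp * dS_dV - dT_dV * dS_dp


for p, V in [(1.0e5, 0.0224), (2.5e5, 0.010), (5.0e4, 0.050)]:
    print(f"p = {p:.1e} Pa, V = {V} m^3: Jacobian = {jacobian_TS_pV(p, V):.6f}")
```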

Given that I know all this, why do I still find myself occasionally baffled by the simplest thermodynamic problems, such as convincing myself that when an isolated system of ideal gas expands, its temperature remains constant? (It does, the math says so, textbooks say so, but still…) There is something uniquely non-trivial about axiomatic thermodynamics.
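The bookkeeping behind the free-expansion result can be sketched in a few lines, assuming the isolated gas expands into a vacuum (a Joule free expansion) and, for concreteness, that it is one mole of a monatomic ideal gas. No heat enters, because the system is isolated. No work is done, because the gas pushes against nothing. So U is unchanged, and since an ideal gas's U depends on T alone, T is unchanged as well. Entropy, being a state function, is evaluated along a reversible path between the same end states, and it increases even though no heat flowed.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)
CV = 1.5 * R     # assumption: monatomic ideal gas, U = CV * T


def free_expansion(T1, V1, V2):
    """Joule expansion of one mole of ideal gas into vacuum inside an isolated container.

    Returns the final temperature and the entropy change.
    """
    Q = 0.0      # isolated system: no heat exchanged
    W = 0.0      # expansion into vacuum: no work done by the gas
    dU = Q - W   # first law, with W the work done BY the gas (dQ = dU + p dV)
    T2 = T1 + dU / CV  # U = CV*T depends on T only, so dU = 0 means T2 = T1
    # Entropy change from S = CV ln T + R ln V, evaluated between the end states
    dS = CV * math.log(T2 / T1) + R * math.log(V2 / V1)
    return T2, dS


T2, dS = free_expansion(300.0, 0.010, 0.020)
print(f"T2 = {T2} K, entropy change = {dS:.4f} J/K")
```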

The other day, arXiv.org split a popular category, astro-ph, into six subcategories. This is convenient… astro-ph, the astrophysics archive, was getting rather large, and the split into sub-categories makes it easier to find papers that are relevant to one’s specialization.

On the other hand… it also means that one is less likely to read papers that are not directly relevant to one’s specialization, but may be interesting, eye-opening, and may help to broaden one’s horizons. Is this a good thing?

There are no easy answers, of course… the number of papers just on arXiv.org is mind-boggling (they proudly announced that they’ve passed the half-million-paper milestone in October, with thousands of new papers added every month) and no one has the time to read them all. Hmmm, perhaps I should have spent more time applauding a recent initiative by Physical Review, their This Week in Physics newsletter and associated Web site.

“John Moffat is not crazy.” These are the opening words of Dan Falk’s new review of John’s book, Reinventing Gravity, which (the review, that is) appeared in the Globe and Mail today. It is an excellent review, and it was a pleasure to see that the sales rank of John’s book immediately went up on amazon.ca. As to the opening sentence… does that mean that I am not crazy either, having worked with John on his gravity theory?

This is what Windows Vista’s weather gadget told me this morning:

Slightly exaggerated

Fortunately, it is lying. It’s only -29 outside, not -35. Still, it made me remember fondly the good ole’ days when there was still some global warming…

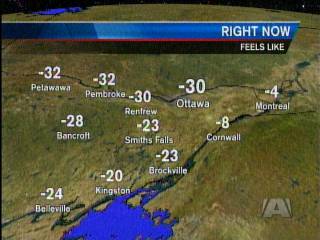

Wow. Look at this temperature gradient between Ottawa and Montreal:

Arctic cold front

And while these are wind chill temperatures, the real thing is soon to follow: some stations forecast a temperature of -33 Centigrade Friday morning. Needless to say, global warming is not exactly high on the list of priorities of most people I know.

Here’s an article worthy of a bookmark:

http://peltiertech.com/Excel/Charts/XYAreaChart2.html

It offers a way to produce a chart in Microsoft Excel much like this one:

Filled XY area chart

This chart is from something I’m working on, an attempt to test gravitational theories against galaxy survey data.

The link above also comes with a warning: the discussed technique doesn’t work with Excel 2007, due to a (presumably unintentional) change in Excel’s handling of certain complex charts. A pity, but it is also a good example of why I am trying to maintain my immunity against chronic upgrade-itis. Two decades ago upgrades were important because they fixed severe bugs and offered serious usability improvements. But today? Why on Earth would I want to upgrade to Office 2007 when Office 2003 does everything I need and more, just so that I can re-learn its user interface? Or make Microsoft richer?

I just read this term, “paparazzi physics”, in Scientific American. Recently, several papers were published on the PAMELA result referencing not a published paper, not even an unpublished draft on arxiv.org, but photographs of a set of slides that were shown during a conference presentation. An appropriate description! But, I think “paparazzi physics” can be used also in a broader sense, describing an alarming trend in the physics community to jump on new results long before they’re corroborated, in order to prove or disprove a theory, conventional or otherwise.

I am starting the new year by reading about a substantial piece of cryptographic work: a successful attack against MD5, a hash function widely used in the certificates that validate secure Web sites.

That nothing lasts forever is not surprising, and it was always known that cryptographic methods, however strong, may one day be broken as more powerful computers and more clever algorithms become available. What I find astonishing, however, is that even though this particular vulnerability of MD5 has been known theoretically for years, several of the best known Certification Authorities continued to use this broken method to certify secure Web sites. This is hugely irresponsible, and should a real attack actually occur, I’d not be surprised if many lawsuits followed.

The theory behind this attack is complicated, and the hardware is substantial (200 Playstations used as a supercomputing cluster were required to carry out the attack.) One basic reason why the attack was possible in the first place has to do with the “birthday paradox”: it is much easier to construct a fake certificate that has the same signature as a valid certificate than it is to recover the original cryptographic key used to sign the valid certificate.

This has to do with the probability that two persons at a party have the same birthday. For a greater than 50% chance that another person at a party has your birthday, the party has to be huge, with more than 252 guests. However, the probability that at a given party you find at least two people who share the same birthday (but not necessarily yours) is greater than 50% even for a fairly small party of just 23 guests.

This apparent paradox is not hard to understand. When you meet another person at a party, the probability that he has the same birthday as you is 1/365 (I’m ignoring leap years here). The probability that he does NOT have the same birthday as you, then, is 364/365. The probability that two individuals both do NOT have the same birthday as you is the square of this number, (364/365)². The probability that none of three separate individuals has the same birthday as you is the cube, (364/365)³. And so on, but you need to go all the way to 253 people before this result drops below 0.5, i.e., before the probability that at least one of the people you meet DOES have the same birthday as you becomes greater than 50%.
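The count of 253 can be checked with a short sketch that multiplies (364/365) once per guest until the chance that nobody matches your birthday falls below one half. As above, it assumes 365 equally likely birthdays and ignores leap years.

```python
import math


def guests_needed_to_match_you(days=365, target=0.5):
    """Smallest n such that P(at least one of n guests shares YOUR birthday) > target.

    Each guest independently misses your birthday with probability (days-1)/days,
    so all n of them miss it with probability ((days-1)/days)**n.
    """
    p_all_miss = 1.0
    n = 0
    while 1.0 - p_all_miss <= target:
        n += 1
        p_all_miss *= (days - 1) / days
    return n


n = guests_needed_to_match_you()
print(n)  # 253
# Closed form for the same threshold: n >= ln(0.5) / ln(364/365)
print(math.ceil(math.log(0.5) / math.log(364 / 365)))
```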

However, when we relax the condition and no longer require a guest to have the same birthday as you, only that there’s a pair of guests who happen to share their birthday, we need to think in terms of pairs. When there are n guests, they can form n(n − 1)/2 pairs. For 23 guests, the number of pairs they can form is already 253, the same number of chances we needed above, and therefore the probability that at least one of these pairs has a shared birthday becomes greater than 50%. (This pair-counting argument treats the pairs as independent, which they are not quite; an exact calculation gives about 50.7% for 23 guests.)
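The pair-counting argument can be compared with the exact calculation in a small sketch. The exact probability multiplies the chances that each successive guest avoids all earlier birthdays. The heuristic treats the n(n − 1)/2 pairs as independent trials with a 1/365 chance each. Both assume 365 equally likely birthdays, and both cross 50% at 23 guests.

```python
def p_some_shared_birthday(n, days=365):
    """Exact probability that at least two of n guests share a birthday."""
    p_all_distinct = 1.0
    for k in range(n):
        p_all_distinct *= (days - k) / days  # guest k avoids the k birthdays already taken
    return 1.0 - p_all_distinct


def p_pairs_heuristic(n, days=365):
    """Pair-counting approximation: treat the n(n-1)/2 pairs as independent trials."""
    pairs = n * (n - 1) // 2
    return 1.0 - ((days - 1) / days) ** pairs


smallest = next(n for n in range(2, 366) if p_some_shared_birthday(n) > 0.5)
print(smallest)  # 23
for n in (22, 23):
    print(n, round(p_some_shared_birthday(n), 4), round(p_pairs_heuristic(n), 4))
```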

On the cryptographic front, what this basically means is that even as breaking a cryptographic key requires 2^k operations, a much smaller number, only 2^(k/2), is needed to create a rogue cryptographic signature, for instance. It was this fact, combined with other weaknesses of the MD5 algorithm, that allowed these researchers to create a rogue Certification Authority certificate, with which they can go on and create rogue secure certificates for any Web site.
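The 2^(k/2) effect is easy to see on a toy scale. The sketch below runs a generic birthday search for two different messages whose MD5 digests agree in their first k bits. It truncates the digest to k = 24 bits so that it finishes quickly, and the messages ("certificate #0", "certificate #1", ...) are made-up placeholders. This only illustrates the birthday bound. It is not the chosen-prefix attack the researchers used, which also relied on structural weaknesses of MD5.

```python
import hashlib
from itertools import count


def truncated_md5(data: bytes, bits: int) -> int:
    """First `bits` bits of the MD5 digest, as an integer (a deliberately weakened hash)."""
    digest = hashlib.md5(data).digest()  # 16 bytes = 128 bits
    return int.from_bytes(digest, "big") >> (128 - bits)


def find_collision(bits: int = 24):
    """Hash distinct messages until two share a truncated digest.

    Expected work is on the order of 2**(bits/2) hashes, not 2**bits.
    Returns (first_message, second_message, number_of_messages_hashed).
    """
    seen = {}
    for i in count():
        msg = f"certificate #{i}".encode()  # hypothetical placeholder messages
        h = truncated_md5(msg, bits)
        if h in seen:
            return seen[h], msg, i + 1
        seen[h] = msg


m1, m2, tries = find_collision(24)
print(m1, m2, tries, "messages hashed; 2**12 =", 2 ** 12, "and 2**24 =", 2 ** 24)
```

With a 24-bit digest, the search typically ends after a few thousand messages, near 2^12, rather than the roughly 2^24 needed to match one particular digest.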

This is a sad picture:

Yesterday, NASA released its final report about the Columbia accident, complete with gruesome but necessary details about how seven astronauts died.

… these were the words with which the crew of Apollo 8 wished a Merry Christmas to the citizens of Earth, during the 9th lunar revolution, some 380,000 kilometers from their home planet… 40 years ago today.

Earthrise from Apollo 8

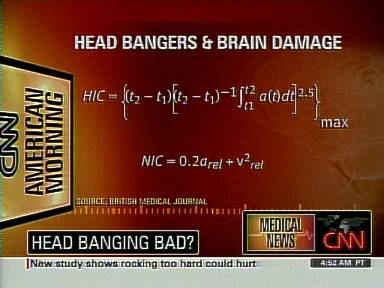

This is not what I usually expect to see when I glance at CNN:

CNN and integrals

It almost makes me believe that we live in a mathematically literate society. If only!

The topic, by the way, was a British Medical Journal paper on brain damage caused by a dancing style called headbanging. I must say, even though I grew up during the disco era, I never much liked dancing. But, for what it’s worth, I not only know how to do integrals, I actually enjoy doing them…

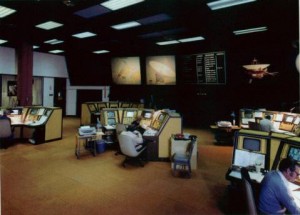

I don’t know, maybe it’s just me, but there is something magically beautiful in vintage 1960s high tech. Take JPL’s Space Flight Operations Center, for instance.

Sure, wall-size LCD or plasma screens are nowadays a dime a dozen, and as to the computing equipment in that room, hey, my watch probably has more transistors. Still… there is something awe-inspiring in this picture that is just not there when you walk into a Best Buy.

The prevailing phenomenological theory of modified gravity is MOND, which stands for Modified Newtonian Dynamics. What baffles me is how MOND managed to acquire this status in the first place.

MOND is based on the ad-hoc postulate that the gravitational acceleration of a body in the presence of a weak gravitational field differs from that predicted by Newton. If we denote the Newtonian acceleration with a, MOND basically says that the real acceleration a′ satisfies a = μ(a′/a0)a′, where μ(x) is a function such that μ(x) ≈ 1 if x >> 1, and μ(x) ≈ x if x << 1. Perhaps the simplest example is μ(x) = x/(1+x).
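To see what the formula does in practice, here is a minimal sketch using Milgrom's usual formulation, in which μ multiplies the real acceleration a′ and the product equals the Newtonian acceleration a: a = μ(a′/a0)a′. With μ(x) = x/(1+x), this becomes a quadratic equation with a closed-form positive root. The value a0 ≈ 1.2 × 10⁻¹⁰ m/s² is an assumption, taken as a commonly quoted figure. In strong fields the result tends to the Newtonian value, while in weak fields it tends to √(a·a0).

```python
import math

A0 = 1.2e-10  # m/s^2; assumed value of Milgrom's acceleration constant


def mu(x):
    """The 'simple' interpolating function mu(x) = x / (1 + x)."""
    return x / (1.0 + x)


def mond_acceleration(a_newton, a0=A0):
    """Solve mu(a/a0) * a = a_newton for the MOND acceleration a.

    With x = a/a0 and y = a_newton/a0 the condition is x**2 / (1 + x) = y,
    i.e. x**2 - y*x - y = 0, whose positive root is (y + sqrt(y**2 + 4*y)) / 2.
    """
    y = a_newton / a0
    x = (y + math.sqrt(y * y + 4.0 * y)) / 2.0
    return x * a0


for a_n in (1e-6, 1e-8, 1e-10, 1e-12, 1e-14):
    a = mond_acceleration(a_n)
    print(f"Newtonian {a_n:.0e} m/s^2 -> MOND {a:.3e} m/s^2 "
          f"(ratio {a / a_n:.3g}; sqrt(a_N*a0) = {math.sqrt(a_n * A0):.3e})")
```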

OK, so here is the question that I’d like to ask: Exactly how is this different from the kinds of crank explanations I receive occasionally from strangers writing about the Pioneer anomaly? MOND is no more than an ad-hoc empirical formula that works for galaxies (duh, it was designed to do that) but doesn’t work anywhere else, and all the while it violates such basic principles as energy or momentum conservation. How could the physics community ever take something like MOND seriously?

It appears that the heavens have a sense of humor after all… why else would they be smiling like that?

In recent weeks, there has been a lot of discussion in the physics community about a curious observation: an abundance of energetic positrons observed by a satellite named PAMELA. According to some people, this unexpected abundance of positrons is likely caused by annihilation of massive dark matter particles, constituting “smoking gun” evidence that dark matter really exists.

Of course it is possible that this abundance is due to some conventional astrophysics, such as pulsars doing this or that. This is a subject of ongoing dispute.

One thing I do not see discussed is that the anticipated behavior is apparently based on a model developed several years ago that uses as many as eleven adjustable parameters, yet nevertheless does not produce a spectacularly good fit of even the low-energy data. I wonder if I am missing something.

Looks like Stephen Hawking is coming to Waterloo. I may not be an adoring fan, but I am certainly an admirer: being able to overcome such a debilitating disease and live a creative life is no small accomplishment even when you don’t become a world class theoretical physicist in the process.

A new paper by Sean Carroll asks this question in its title: “What if time really exists?” I feel reassured that Carroll thinks it does (hmmm, let me check my watch… yup, I think it exists, too), but the fact that a paper with this title appears in an archive of theoretical physics papers perhaps illustrates what is so wrong with physics today. To quote Carroll, “when something is so obvious and important, declaring that it isn’t real is sure to win points for boldness”, but have physicists really become this shallow?