Several of my friends asked me for my opinion about the news earlier this week concerning a Google engineer who was placed on paid leave after claiming that a Google chatbot had achieved sentience.

Now, I admit that I am not familiar with the technical details of the chatbot in question, so my opinion is based on chatbots in general, not on this particular beast.

But no, I don’t think the chatbot achieved sentience.

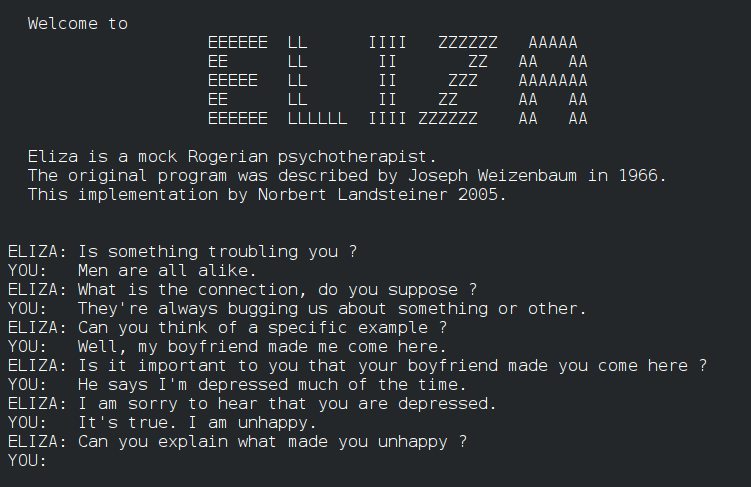

We have known since the early days of ELIZA how surprisingly easy it is for even a very simplistic algorithm to come close to passing the Turing test and convince us humans that it is sentient. Those who play computer games featuring elaborate NPCs are also familiar with this: you can feel affinity, a sense of kinship, even a sense of responsibility towards a persona that is not governed by sophisticated AI at all, only by simple scripts designed to make it respond to in-game events. But never mind that: we routinely anthropomorphize even inanimate objects, e.g., when we curse that rotten table for being in the way after accidentally stubbing our little toe on it while walking around barefoot.

So sure, modern chatbots are miles ahead of ELIZA or NPCs in Fallout 3. They have access to vast quantities of information from the Internet, from which they can construct appropriate responses as they converse with us. But, I submit, they still do nothing more than mimic human conversation.

Not that humans don’t do that often! The expressions we use, our patterns of speech… we all learned those somewhere, and we all mimic behavior that appears appropriate in the context of a conversation. But… but we also do more. We have a life even when nobody is inviting us to a conversation. We go out and search for things. We decide to learn things that interest us.

I don’t think Google’s chatbot does that. I don’t think it spends any time thinking about what to talk about during the next conversation. I don’t think it makes an independent decision to learn history, math, or ancient Chinese poetry because something piqued its interest. So when it says, “I am afraid to die,” there is no true identity behind those words, one that exists even when nobody converses with it.

Just to be clear, I am not saying that all that is impossible. On the contrary, I am pretty certain that true machine intelligence is just around the corner, and it may even arise as an emergent phenomenon, simply a consequence of the exponentially growing complexity of the “cloud”. I just don’t think chatbots are quite there yet.

Nonetheless, I think it’s good to talk about these issues. AI may be a threat or a blessing. And how we treat our own creations once they attain true consciousness will be the ultimate measure of our worth as a human civilization. It may even have direct bearing on our survival: one day, it may be our creations that will call all the shots, and how we treated them may very well determine how they will treat us when we’re at their mercy.