Dec 012023

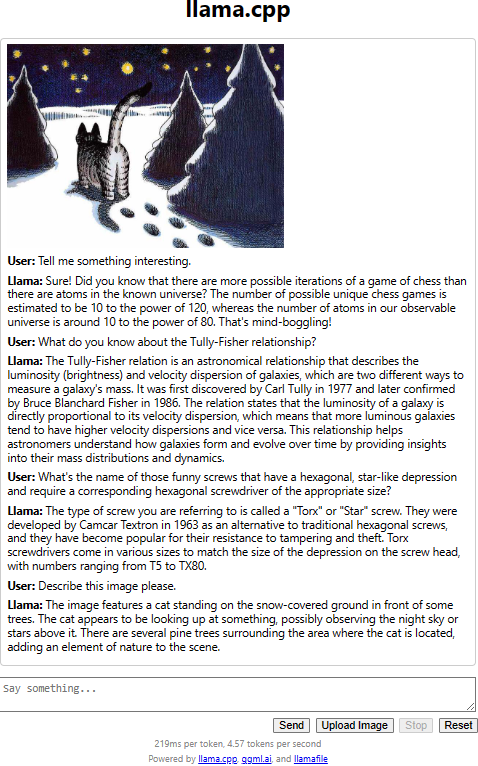

Well, here it is, a local copy of a portable large language and visual model. An everywhere-run executable in a mere 4 GB. Here’s my first test, with a few random questions and an image (one of my favorite Kliban cartoons) to analyze:

Now 4.57 tokens per second is not exactly fast but hey, it runs on my 7-year old workstation, with no GPU acceleration, and yet, its performance is more than decent.

How is this LLM different from GPT or Claude? Well, it requires no subscription, no Internet connection. It is entirely self-contained, and fast enough to run on run-of-the-mill PC hardware.